User Guide

The Difference Machines User Guide for the exhibition features a map, glossary, curatorial essays, selected bibliography, and a note from our curators.

Here you will find interactive guides and other resources that will take you on deep dives into the ideas behind Difference Machines.

The Difference Machines Family Guide features an interactive game to guide users of all ages through the exhibition and to build their own imaginary worlds and technological marvels.

Explore the issues at the heart of Difference Machines with two essays by the exhibition's co-curators, University at Buffalo Professor Paul Vanouse and Albright-Knox Assistant Curator Tina Rivers Ryan.

Note: Words in bold are defined in the Glossary below.

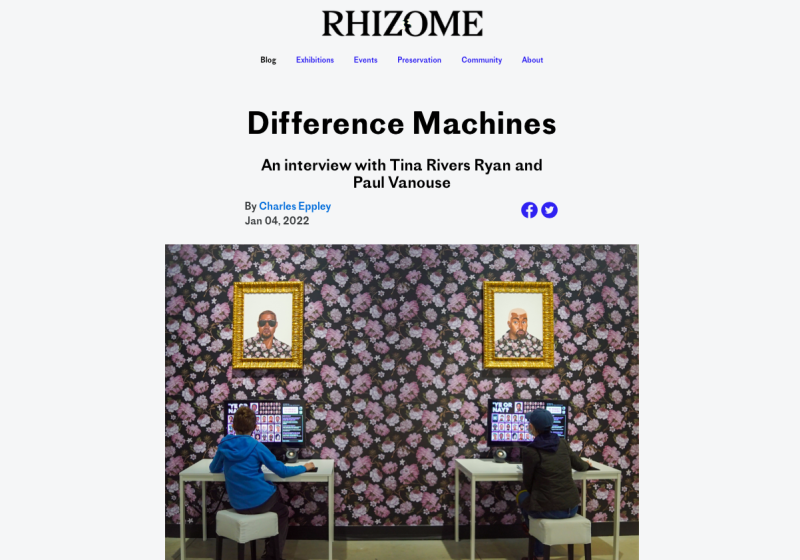

At the entrance to the Albright-Knox Art Gallery’s exhibition Difference Machines: Technology and Identity in Contemporary Art, visitors see four large color photographs by the artist Zach Blas. Each shows the head of a different person who looks towards the camera as if they are posing for a mugshot, but their faces are hidden behind opaque, futuristic-looking plastic shells. Like a superhero’s mask, these digitally-designed sculptures confer a special power: they make their wearers undetectable by the facial recognition programs being used to monitor and police marginalized communities.

To the right of these photographs hangs an enormous banner comprising almost 32,000 individual photographs of mundane details from someone’s life: a plate of food, a receipt, a view from a window. Gathered at this scale, they suggest the enormity of the databases that keep track of every aspect of our lives—with and without our consent. Notably, the artist Hasan Elahi has been sharing these photographs online ever since the FBI wrongly suspected him of being a terrorist and spent six months investigating him in the wake of 9/11—years before the advent of Instagram.

Beyond this banner is an installation of fifty cutouts suspended like a children’s mobile. Each has a life-sized, pixelated photograph of a woman’s body part on one side and a tropical-print fabric on the other. Many of the women are tanned or dark-skinned and wear bikinis, typifying the “exotic” images one might find when searching for women from the Caribbean on the internet. By fragmenting their bodies, the artist Joiri Minaya points to the role algorithms play in perpetuating stereotypes and producing partial images of reality.

The last work on the north side of the building is by the artist Keith Piper, who presents an explicitly dystopian view of technology’s role in identifying and tracking social groups. Four television screens show a Black man’s head rotating in space while a target continuously follows his face. Behind him are matrices of digital numbers, samples of other bodies, and close-ups of maps and newspapers. Pairs of words are overlaid on top of him: “subject object,” “culture ethnicity,” “other boundaries,” “visible difference.” The audio includes snippets from news stories about data protection, civil rights, immigration, and hate crimes, all interspersed with the sound of sirens wailing and a relentless synth beat.

These four works are a fitting introduction to the first major museum exhibition focused on the increasingly complex relationship between the technologies we use and the identities we inhabit. Together, they suggest that technology now plays a major role in capturing and shaping our identities. More than just tools, these technologies are redefining how we see ourselves, and each other. The earliest computers were called “difference engines,” as they were used to calculate the differences between numbers. Today, we are surrounded by “difference machines,” or computers that are used to encode the differences between us.

Difference Machines: Technology and Identity in Contemporary Art includes seventeen of the most important artists and collectives who have explored the aesthetic and social potential of emerging technologies, and especially the relationship between digital technologies and our collective identities.[1] Whether they identify as Black, Latinx, Middle Eastern, South Asian, East Asian, Indigenous, queer, or trans, each of them views technology through their own unique lived experiences and artistic perspectives. The exhibition includes projects that span the last three decades, ranging from software-based and internet art to animated videos, bioart experiments, digital games, and 3-D printed sculptures. Many of them explore how digital systems contribute to the exclusion, erasure, and exploitation of marginalized communities. Others emphasize how digital tools can be repurposed to tell more inclusive stories or imagine new ways of being. Dynamic and interactive, these projects transform the space of the museum into a laboratory for experimenting with our increasingly powerful “difference machines.”

While Difference Machines highlights contemporary artworks that explore the intersection of technology and identity, this introduction aims to sketch their theoretical and historical contexts. The first section discusses how digital tools both empower marginalized communities and amplify systemic inequities. The second section proposes a history of digital art focusing on artists who have explored the concept of difference, and particularly the role that difference plays in our digital systems and on the internet. The essay concludes with an overview of the major themes explored by the artworks in the exhibition.

In a sense, computers have always been tied to how we define our identities. In 1821, Charles Babbage designed his first difference engine, a mechanical calculator that was used to generate large tables of numerical data, and which anticipated his analytical engine—the first general-purpose, programmable computer.[2] By the turn of the twentieth century, some of the largest analog databases in the world were devoted not to mathematical equations, but to census and life insurance records. Aggregating this data allowed governments and corporations to produce statistical information about social groups.[3] When computers first became commercially available in the 1950s, these records were digitized, laying the groundwork for what we call Big Data today. Thus, modern computers were shaped not only by military research conducted during World War II and the Cold War, but also by the peacetime need to quantify and manage our individual and collective identities.[4]

The miniaturization of computers in the 1970s, the popularization of personal computing in the 1980s, and the emergence of the World Wide Web in the 1990s transformed computers from an elite research tool into a consumer appliance and an increasingly ubiquitous part of our everyday lives. Popular culture soon began describing cyberspace as a kind of parallel world that allows us to escape our bodies and assume any identity. As early as 1993, a now-legendary New Yorker cartoon depicted one dog explaining to another, “On the internet, nobody knows you’re a dog,” pointing out the appeal (and absurdity) of what has been described as identity tourism. One early book on avatars even went so far as to argue that on the internet, identity is irrelevant: “one of the best features about life in digital space is that your skin color, race, sex, size, religion, or age does not matter,” it claimed.[5]

But thinking that the internet allows us to change our identity ignores the nuanced social dynamics of cultural difference, as Jennifer González has argued.[6] For example, pretending to be another race online necessarily depends upon and reinforces stereotypes about how a given race might talk or act. More profoundly, identity tourism suggests that identity is merely a superficial “skin” that can be changed as easily as we change clothes, rather than a fundamental part of who we are. Our identities may not be ingrained in our DNA, but they are deeply rooted in the way we interact with the world and experience power and privilege. Furthermore, while it is possible to be anonymous on the internet, the idea that we could totally escape our identities was always fantasy. As Lisa Nakamura noted in her groundbreaking 2002 book Cybertypes: Race, Ethnicity, and Identity on the Internet, even the language of early internet users—who could only represent themselves by using words, not images—reflected their ideas about race and gender.[7] In other words, the way that we use the internet can reinforce as well as resist racial and gendered hierarchies, as Jessie Daniels has argued.[8]

The social media platforms that allow us to express ourselves and find community online have further blurred the distinction between online and offline life. Facebook was the first to require that we use our real names instead of anonymous handles to create profiles, which became the basis of online “social networks” that mirror our pre-existing personal and professional networks. We now constantly and willingly upload all sorts of identifying information, from our gender, age, and sexuality to our family genealogies, medical histories, and even our GPS locations. This data is used to create digital doubles that reflect every dimension of who we are, including our personal preferences and daily habits. Social media sites, news organizations, and streaming services promote specific content to us (and other people like us) based on these doubles, which reinforces the boundaries of identities and subcultures. When users who are all experiencing similar content interact, they create “corners” on the web that can even become their own communities, such as “Black Twitter” or “lesbian TikTok.” In extreme cases, the algorithms that govern these interactions produce filter bubbles, creating progressively narrower echo chambers that can lead to the cultivation of increasingly extreme viewpoints. The recent increase in hate groups, for example, is partly due to how such algorithms facilitate the sharing of white supremacist beliefs and conspiracy theories.

Over the past decades, scholars in various disciplines have explored the evolving relationship between technology and identity. Some chronicle how diverse communities use digital tools. Examples of this work include the essays in the anthology The Intersectional Internet: Race, Sex, Class, and Culture Online; the books by Charlton D. McIlwain and André Brock Jr. on Black networks; Jennifer Gómez Menjívar and Gloria Elizabeth Chacón’s volume on new technologies in Indigenous communities; and Bonnie Ruberg’s book on how queer people experience and design videogames.[9] Other scholars explore the metaphorical and literal intersections of our concepts of identity and technology. Lisa Nakamura examines what she calls “digital racial formation,” or the formation of racial categories through visual digital technologies, while Legacy Russell proposes that the internet can be a space in which we glitch gender codes and Shaka McGlotten connects Black queer life to the mining of data.[10] Some argue that digital tools are themselves “encoded” with ideas about identity. Tara McPherson contends that the principles behind UNIX—an important operating system created in the late 1960s during the civil rights era—“hardwired an emerging system of covert racism into our mainframes and our minds.”[11] Similarly, Jacob Gaboury argues that computational media are inherently heteronormative in their reliance on binary logic and the act of identification.[12] Inspired in part by McPherson, Kara Keeling proposes the idea of a “Queer OS,” or Queer Operating System: a framework for understanding how our media and information technologies shape—and also are shaped by—our identities.[13] Scholars such as Wendy Hui Kyong Chun and Beth Coleman even propose that race itself is a technology that was “coded” during the Enlightenment.[14]

Concurrently, activists fighting for social justice have turned digital technologies into an important part of their practice. Whereas traditional print and broadcast media are one-way communication streams controlled by people with various forms of privilege, digital media require comparatively fewer resources to operate, allowing even marginalized peoples the opportunity to promote their own narratives and build communities (if they can secure access). Famously, in 1994, the Zapatistas, a pro-democracy guerrilla group based in Chiapas, Mexico, coordinated between Indigenous and campesino groups in Chiapas and elsewhere via messages shared on internet listservs and forums.[15] Today, hashtags on social media, such as #BlackLivesMatter, #TransDayofVisibility, #UsaTuVoz, and #CriptheVote, make identities visible and amplify demands for representation and social or political action.

Marginalized communities also are active in designing technologies according to their own specific needs. For example, disabled people have a long history of hacking or inventing their own technologies, from mobility aids to health-tracking programs, producing what is now referred to by some as CripTech.[16] These projects are a counterpoint to the commercial devices that are designed by able-bodied people for disabled people, many of which are what the activist and designer Liz Jackson calls disability dongles: elegant and typically expensive technologies that fail to address the most important challenges that disabled people face.[17]

While they can be empowering, digital tools also play a role in perpetuating—and even exacerbating—systemic forms of oppression. At the most basic level, many marginalized communities do not have equal access to computers (a situation referred to as the digital divide), which can limit academic and professional opportunities, especially with the recent explosion of remote schooling and working from home.[18] These same communities also may not have access to high-speed internet: because internet access is not considered a utility (like water or electricity), internet service providers are allowed to choose where they want to invest in their infrastructure. As a result, they often do not invest in places that may be less profitable for them, like municipal housing developments or Native American reservations. This practice is known as digital redlining, as it echoes how banks and other businesses can offer inferior services to underprivileged neighborhoods.[19] Because of the digital divide and digital redlining, both the perils and the promises of the internet are not evenly distributed.

In recent years, the way that databases and algorithms themselves contribute to systemic inequity has been met with greater public scrutiny, thanks in part to exposés such as the 2020 documentary Coded Bias. Increasingly, these tools influence decisions that impact our entire lives, from the grades we receive to the medical care we are provided and the jobs we are offered.[20] Even our justice system has begun using algorithms to predict crimes (known as predictive policing) and determine sentences based on the calculated likelihood that defendants will commit a crime again (known as algorithmic risk assessment). These algorithms are notoriously discriminatory, giving rise to what Ruha Benjamin calls “the New Jim Code.”[21] For example, in the spring of 2019, research in the American Criminal Law Review revealed that the most popular risk assessment program discriminates against Hispanic defendants, and in January 2020, an innocent Black man named Robert Julian-Borchak Williams was arrested because a facial recognition system wrongly matched him to surveillance footage of a robbery suspect.[22]

The problem with such digital tools is not just how they are used; it is also how they are designed. In her 2018 book Algorithms of Oppression: How Search Engines Reinforce Racism, Safiya Umoja Noble coined the term algorithmic oppression to describe how software programs contribute to systemic inequities—even in their very coding. “While we often think of terms such as ‘big data’ and ‘algorithms’ as being benign, neutral, or objective, they are anything but. The people who make these decisions hold all types of values, many of which openly promote racism, sexism, and false notions of meritocracy,” she writes.[23] This is a problem because algorithms and databases are only as good as the information that is fed to them. For example, engineers “train” facial recognition algorithms using databases of faces, but if they fail to use diverse databases of images, the algorithms used by law enforcement to identify suspects (to cite one example) are more likely to produce false identifications of non-white subjects. This is why organizations such as the Algorithmic Justice League, Data for Black Lives, and Data & Society are increasing awareness about the uses and abuses of digital technologies and demanding greater transparency, accountability, and regulation in the technology sector.

Today’s conversations about the intersection of technology and identity resonate with the history of digital art, which has always been tied to the social uses of digital tools. The development of Western art has long depended on artists embracing new tools, from Albrecht Dürer’s drawing grids to Vincent van Gogh’s use of commercial paint tubes. In the 1960s, researchers at companies such as Bell Labs and Siemens realized they could use software programs to produce abstract and figurative patterns, pioneering what was then known as computer art.[24] But by then, “art” and “technology” seemed to be opposed terms, reflecting a division between the “two cultures” of the humanities and the sciences.[25] The technological optimism of the Space Age was met by a technophobic backlash driven by the moral horror of nuclear warfare, the economic consequences of automation, the environmental costs of resource extraction, and the existential threat of artificial intelligence. As an integral part of the so-called military-industrial complex that had been developing since the 1950s, technology seemed incompatible with the humanistic ideals espoused by many in the art world.[26] The sculptor Richard Serra famously wrote in 1970, “Technology is what we do to the Black Panthers and the Vietnamese under the guise of advancement in a materialistic theology.”[27]

From the 1970s into the 1980s, artists working with computers and other forms of technology were widely suspected of being complicit with—or at the very least, insufficiently critical of—a newly technocratic society. It is true that many artists working with these tools focused primarily on developing new aesthetic forms, such as interactive texts, computer-generated paintings, and virtual environments. But many also addressed the role of technology in shaping identity and community in a globalized world. Some of the most important early video artists, for example, were women who used newly accessible video recording and editing techniques to critique the sexism of mass media film and television. Most famously, Dara Birnbaum’s Technology/Transformation: Wonder Woman, 1978–9, deconstructs the television show’s supposedly feminist representation of a powerful (and even “technological”) woman. Other artists who were early adopters of technology explored its capacity to bring communities and cultures together. Nam June Paik and John Godfrey’s iconic video Global Groove, 1973, celebrates the idea of using telecommunications systems to create global creative networks, as seen in its mash-up of performances by Koreans and Americans.

In the 1990s—in the wake of the culture wars and against the backdrop of the emergence of identity politics—a new generation of artists began using digital technologies to highlight the complexities of systemic bias, marginalization, and oppression.[28] In 1993, the group (RT)mark, operating as the Barbie Liberation Organization, switched the voice boxes in some of Mattel’s Barbie and G.I. Joe dolls so that the former uttered phrases like “vengeance is mine,” while the latter excitedly asked if we would like “to go shopping.”[29] The group secretly returned the dolls to store shelves so that unsuspecting families would be forced to consider how we teach our children to perform gender stereotypes. Ken Gonzales-Day’s digitally-composited photographic series The Bone Grass Boy: The Secret Banks of the Conejos River, c. 1996, features the fictitious Indigenous and Latina two-spirit character Ramoncita, whose body queers and decolonizes our cultural imagination of the nineteenth-century American frontier.[30] The multi-ethnic British group Mongrel similarly used digital editing to produce photographs that questioned national myths about race as part of their project National Heritage, 1997–99, swapping the skins of faces using visible sutures. Produced by Graham Harwood (one of Mongrel’s members) in close collaboration with the staff and patients of a high-security psychiatric hospital, the interactive CD-ROM and installation Rehearsal of Memory, 1996, allows users to “rehearse” the traumatic memories of people sentenced to asylum. Tamiko Thiel and Zara Houshmand’s Beyond Manzanar, 2000, resurrects a different kind of traumatic memory, reimagining the first internment camp built for Japanese Americans during World War II as a virtual landscape. As a public art project, Ricardo Miranda Zúñiga’s Vagamundo, 2002, presented the specific plight of undocumented Mexicans in New York as a videogame that can be played either online or on a mobile cart.

As the web emerged, it allowed artists to not only make art, but also raise the visibility of their communities. As early as 1991, the Australian collective VNS Matrix authored A Cyberfeminist Manifesto for the 21st Century, which they circulated in the form of fax messages, emails, posters, and billboards.[31] Using suggestive language that parodied what is now called techbro culture, they declared themselves “saboteurs of big daddy mainframe,” protesting the default association of digital technology with (white) men and advancing the idea of a feminist technoculture.[32] In 1996, the Kanien’kehá:ka (Mohawk) artist Skawennati founded CyberPowWow, a website and series of chat rooms devoted to contemporary Indigenous art that subsequently evolved into AbTeC (Aboriginal Territories in Cyberspace), a network for promoting the representation of Indigenous people in virtual worlds such as Second Life.[33] From 1999–2004, the Uruguayan artist Brian Mackern maintained a “netart latino database” (itself an artwork) to highlight net art projects by Latinx artists. The landing page displayed Mackern’s ASCII interpretation of América Invertida, a 1943 drawing by Joaquín Torres García that inverts North and South America, making the latter the privileged term.[34]

Many artists belonging to this first generation of net art targeted the idea that we leave behind our bodies and identities when we go online. In response to the increasing popularity of virtual avatars, Victoria Vesna’s 1996 website Bodies© Incorporated allowed visitors to assemble their own virtual bodies from body parts that recognizably belonged to different genders, races, and ages, but only after signing away the rights to their avatars. The work suggested that even our virtual identities are tied to bodies that belong to cultural value systems, including legal ones.[35] Roshini Kempadoo’s website Sweetness and Light, commissioned for a 1996 project about technology and colonization called La Finca/The Homestead, explicitly connected the rush to colonize the new “territories” of cyberspace to the history of European colonization. In both, maximizing profits depends on the maintenance of racial hierarchies and power asymmetries. Shu Lea Cheang’s Brandon, 1998–99, was a complex interactive project combining texts, images, virtual and real spaces, and performances displayed across various interfaces.[36] In response to the murder of trans man Brandon Teena and a virtual assault that took place in a chat room (both in 1993), Brandon presented gender as a social code that programs our experience of violence and power both offline and online. Guillermo Gómez-Peña and his collaborator Roberto Sifuentes made a similar claim about race and ethnicity in a website they launched around 1994 that was based on their live performance-installations called Temple of Confessions. The site invited anonymous internet users to “Confess Your Intercultural Cyber-Sins,” including “your fears, desires, fantasies and mythologies regarding the Latino and the indigenous other,” suggesting that we don’t leave our prejudices behind when we go online, and that the internet might actually amplify them.[37]

These many artworks demonstrate that artists—including many diverse artists—who work with digital technologies have long considered the complex relationship between technology and difference. Unfortunately, as is true across contemporary art, these artists often have not been valued beyond their communities, thanks to the systemic biases of institutions including art museums and galleries. In 1999, the art historian María Fernández wrote about the distinct ways that people associated with technology and with art tend to understand identity, focusing on the artists who bridge that gap, including Keith Piper and Rafael Lozano-Hemmer (both artists in Difference Machines), as well as Difference Machines co-curator Paul Vanouse.[38] The highly specific history of digital art outlined above builds on her work, as well as on texts by Jennifer Chan, Kimberly Drew, Ben Valentine, Aria Dean, and Lila Pagola, among others.[39] This account also draws on a few pioneering exhibitions that are rarely mentioned alongside more general surveys of art and technology from the dot-com era. These include the aforementioned The Homestead/La Finca, which was organized by Paul Hertz in 1996 and presented again under the title Colonial Ventures in Cyberspace in 1997, and Race in Digital Space, organized by Erika Dalya Muhammad at the MIT List Visual Arts Center in 2001. In the introduction to his show, Hertz noted, “Only a fraction of the world’s people have a presence in cyberspace: the rest are outsiders. Will the outsiders eventually participate? Will borders and differences persist in cyberspace? Who decides these issues?”[40] Almost twenty-five years later, cyberspace’s relationship to difference has only become more complex. With so much at stake, it is all the more urgent to rewrite the decades-long history of contemporary art that deals with technology and identity for the present.

In his “First Law of Technology,” Melvin Kranzberg stated: “Technology is neither good nor bad; nor is it neutral.”[41] These words were written in 1986; today, they perfectly describe the digital technologies that shape how we understand and experience our differences. As digital tools continue infiltrating every aspect of our lives in ways that are both obvious and obscure, Difference Machines: Technology and Identity in Contemporary Art invites us to pause and consider: How does technology shape our identities? More specifically: How does technology shape the way we understand the differences between us, including our race, ethnicity, gender, sexual orientation, and dis/ability? And how does technology contribute to—or allow us to resist—the systemic marginalization and oppression of people with certain identities? Art in particular can help us answer these questions by presenting technology and identity in a new light and creating space for them to be imagined differently. The works on view in this exhibition are particularly relevant to our historical moment, as we decide what role we want technology to play in our lives and in our communities while we strive to build a more equitable future.

Several works in the exhibition foreground the idea that identity categories based on physical attributes are not “natural,” but rather, are shaped by society—including by our technologies. One of the earliest works in the show is Mongrel’s Heritage Gold, 1997, a hack of Photoshop 1.0 that transforms identities into filters that can be applied at will, anticipating the widespread use of face filters today. A.M. Darke’s ‘Ye or Nay?, 2020, is an adaptation of the game “Guess Who?,” with one significant twist: all of the figures are Black male celebrities. In producing verbal descriptions of each man, the players perform the same acts of categorization that are central to the construction of collective identities, while also producing the kind of metadata that are tracked by Big Data. Rian Hammond’s Root Picker, 2021, exposes gender as being itself a kind of “code” that has been “programmed” by the biological sciences, the pharmaceutical industry, and colonialism. Joiri Minaya’s #dominicanwomengooglesearch, 2016, reveals that while the eroticization and exoticization of Latinx women has a long history, the internet is now amplifying these stereotypes.

Many artworks are particularly focused on the increasing use of digital technologies to perform surveillance, which can have a more negative impact on marginalized communities. In his 1992 work Surveillance: Tagging the Other, Keith Piper sounds the alarm about the use of digital surveillance to tag certain identities, and especially the United Kingdom’s Black subjects, as being “Other,” extending the long history of the surveillance and classification of Black people.[42] Hasan Elahi’s Thousand Little Brothers, 2014, includes photos from the artist’s ongoing digital self-surveillance, which he began after being wrongly interrogated by the FBI following 9/11, an event that contributed to a massive erosion of the right to privacy, especially for immigrants, Muslims, and people of color. Morehshin Allahyari’s Material Speculation: ISIS, 2015–16, and South Ivan Heads, 2017, protest both the destruction of Middle Eastern cultural heritage by ISIS and the colonialist “capture” of that heritage by Western companies that transform it into their intellectual property. Zach Blas produced the masks in Facial Weaponization Suite, 2012–14, in conjunction with a series of workshops he organized about the dangers of biometric surveillance for women, gay men, and Black, Latinx, and Indigenous people, “weaponizing” surveillance against itself while suggesting that collective identities, including the ones constructed by technology, might themselves be “masks” that hide our individuality.

While being visible within digital systems can make marginalized people vulnerable to surveillance, not being visible enough can become its own problem, too. Another recurring topic in the exhibition is the erasure of marginalized communities through digital technologies, whether by accident or by design. Mendi + Keith Obadike’s early net art projects Blackness for Sale, 2001, and The Interaction of Coloreds, 2002/2018, deploy satire to highlight the literal and metaphorical whiteness of our digital tools. In Level of Confidence, 2015, Rafael Lozano-Hemmer uses facial recognition technology to perform a futile search for the forty-three students who were kidnapped in Iguala, Mexico in 2014. By redirecting this form of surveillance, he underscores that technology is more often used to erode civil rights than to address the needs of victims of injustice. For Insufficient Memory, 2020, Sean Fader used his own photographs and texts to create an interactive database of the murder victims of LGBTQ+ hate crimes, highlighting the “insufficiency” of the stories we use data to tell. Danielle Brathwaite-Shirley’s WE ARE HERE BECAUSE OF THOSE THAT ARE NOT, 2020, uses game mechanics to teach the importance of archiving the lives and experiences of Black trans women. Both Fader’s and Brathwaite-Shirley’s projects acknowledge that being visible can be traumatic and even dangerous for LGBTQ+ people, while also insisting that being excluded from digital systems is its own kind of violence.

Given the relationship between technology and inequity, many of the artists in the show emphasize the importance of reasserting control over the digital technologies that shape our identities. Skawennati’s video She Falls for Ages, 2017, occupies the platform Second Life to retell the traditional Haudenosaunee (Iroquois) creation story as a science fiction narrative. This prompts us to imagine Indigenous people as belonging to the future and not just the past, exemplifying what Anishinaabe author Grace Dillon calls Indigenous Futurism.[43] Sondra Perry’s IT’S IN THE GAME…, 2018, which is based on the economic exploitation of her brother’s likeness by an NCAA Basketball videogame, attempts to reclaim his identity and agency through both technology and art. By questioning a Black female robot named Bina48 about topics such as racism, Stephanie Dinkins points out that the development of artificial intelligence raises profound questions about what it means to be human. Because the answers are necessarily influenced by racism, sexism, ageism, and other biases, AI must be shaped by input from diverse perspectives. Lior Zalmanson’s Excess Ability, 2014, uses Google’s error-prone auto-transcription software on the video of a publicity event in which the company claimed the technology would increase accessibility for the Deaf and hard of hearing. By highlighting the software’s flaws, the video critiques what Meredith Broussard describes as technochauvinism, and suggests the importance of creating technology outside of the limits of Silicon Valley.[44]

With her new video installation Landscape of Anticipation 2.0, 2021, Saya Woolfalk proposes a radical future for technology—and also for identity. The sci-fi figures we meet in this work belong to the artist’s imagined population of chimerical Empathics. These humanoids appear to defy categorization and embrace the idea of hybridity; one might say that they empathize so much with other organisms like plants and animals that they become them. In the tradition of Afrofuturism, Woolfalk’s work, like Skawennati’s, helps us see racialized bodies as belonging to the future (not just the past), and as agents (not just the subjects) of technology. In this utopian world, differences between bodies continue to exist, but are not so easily articulated into rigid, easily identifiable categories. Woolfalk’s work therefore allows us to imagine difference without oppression—or amnesia. Art always has helped us imagine possible futures. What landscape could we anticipate more eagerly than this?

The quotations by the artists in Difference Machines that appear throughout this essay are excerpted from interviews conducted on the occasion of this exhibition, which may be viewed in the exhibition and on the Albright-Knox website.

[1] Other contemporary artists who have produced work relating to this topic include Sophia al-Maria, American Artist, micha cárdenas, Andrea Crespo, Caitlin Cherry, Aria Dean, Heather Dewey-Hagborg, Carla Gannis, Claudia Hart, Matthew Angelo Harrison, Shawné Michaelain Holloway, Ryan Kuo, Lynn Hershman Leeson, Juliana Huxtable, Josh Kline, Carolyn Lazard, Cannupa Hanska Luger, LaJuné McMillian, Jayson Musson, Rashaad Newsome, Mimi Onuoha, Tabita Rezaire, Antonio Roberts, Alfredo Salazar-Caro, Jacolby Satterwhite, Stephanie Syjuco, Theo Triantafyllidis, Lawrence Paul Yuxweluptun, and Amelia Winger-Bearskin—among many others.

[2] See “The Engines,” Computer History Museum, updated 2021, computerhistory.org/babbage/engines/.

[3] See, for example, JoAnne Yates, Structuring the Information Age: Life Insurance and Technology in the Twentieth Century (Baltimore: Johns Hopkins University Press, 2009).

[4] On the military origins of computing, see Paul N. Edwards, The Closed World: Computers and the Politics of Discourse in Cold War America (Cambridge, MA: MIT Press, 1996). On the quantification of daily life, see Jacqueline Wernimont, Numbered Lives: Life and Death in Quantum Media (Cambridge, MA: MIT Press, 2019) and Jer Thorp, Living in Data: A Citizen’s Guide to a Better Information Future (New York: Farrar, Straus and Giroux, 2021).

[5] Bruce Damer, Avatars!: Exploring and Building Virtual Worlds on the Internet (Berkeley, CA: Peachpit Press, 1997), 136.

[6] Jennifer González, “The Face and the Public: Race, Secrecy, and Digital Art Practice,” in “Race and/as Technology,” ed. Wendy Hui Kyong Chun, special issue, Camera Obscura: Feminism, Culture, and Media Studies 70, vol. 24, no. 1 (2009): 37–65. Reprinted in Anna Dezeuze, ed., The “Do-It-Yourself” Artwork: Participation from Fluxus to New Media (Manchester: Manchester University Press, 2012), 185–205.

[7] Lisa Nakamura, Cybertypes: Race, Ethnicity, and Identity on the Internet (New York: Routledge, 2002).

[8] Jessie Daniels, “Rethinking Cyber-Feminism(s): Race, Gender, and Embodiment,” in “Technologies,” special issue, Women’s Studies Quarterly 37, no. 1/2 (Spring/Summer 2009): 101–24.

[9] Safiya Umoja Noble and Brendesha M. Tynes, eds., The Intersectional Internet: Race, Sex, Class, and Culture Online (New York: Peter Lang, 2016); Charlton D. McIlwain, Black Software: The Internet and Racial Justice, from the AfroNet to Black Lives Matter (New York: Oxford University Press, 2020); André Brock Jr., Distributed Blackness: African American Cybercultures (New York: New York University Press, 2020); Jennifer Gómez Menjívar and Gloria Elizabeth Chacón, eds., Indigenous Interfaces: Spaces, Technology, and Social Networks in Mexico and Central America (Tucson: The University of Arizona Press, 2019); and Bonnie Ruberg, Video Games Have Always Been Queer (New York: New York University Press, 2019). For general (though now dated) overviews of some of the models of thinking about race and the internet, see Jessie Daniels, “Race and Racism in Internet Studies: A Review and Critique,” new media & society 15, no. 5 (2012): 695–719, and Amber M. Hamilton, “A Genealogy of Critical Race and Digital Studies: Past, Present, and Future,” Sociology of Race and Ethnicity 6, no. 3 (2020): 292–301.

[10] Lisa Nakamura, Digitizing Race: Visual Cultures of the Internet (Minneapolis: University of Minnesota Press, 2007), 14; Legacy Russell, Glitch Feminism: A Manifesto (London: Verso Books, 2020); Shaka McGlotten, “Black Data,” in No Tea, No Shade: New Writings in Black Queer Studies, ed. E. Patrick Johnson (Durham: Duke University Press, 2016), 262–87. One example of Nakamura’s “digital racial formation” is the link between racial categories and morphing software as described by Evelynn M. Hammonds; see her “New Technologies of Race,” in The Gendered Cyborg: A Reader, ed. Gill Kirkup et al. (New York: Routledge, 2000), 305–17.

[11] Tara McPherson, “Why Are the Digital Humanities So White? or Thinking the Histories of Race and Computation,” in Debates in the Digital Humanities, ed. Matthew K. Gold (Minneapolis: University of Minnesota Press, 2012), dhdebates.gc.cuny.edu/projects/debates-in-the-digital-humanities.

[12] Jacob Gaboury, “Becoming NULL: Queer Relations in the Excluded Middle,” Women & Performance: A Journal of Feminist Theory 28, no. 2 (2018): 143–58.

[13] Kara Keeling, “Queer OS,” Cinema Journal 53, no. 2 (Winter 2014): 152–57. See also Fiona Barnett et al., “QueerOS: A User’s Manual,” in Debates in the Digital Humanities 2016, eds. Matthew K. Gold and Lauren F. Klein (Minneapolis: University of Minnesota Press, 2016), dhdebates.gc.cuny.edu/projects/debates-in-the-digital-humanities-2016.

[14] Wendy Hui Kyong Chun, “Introduction: Race and/as Technology; or, How to Do Things to Race,” and Beth Coleman, “Race as Technology,” in “Race and/as Technology,” special issue, Camera Obscura: 6–35, 177–207.

[15] See, for example, Harry Cleaver, “The Zapatistas and the Electronic Fabric of Struggle,” in Zapatista! Reinventing Revolution in Mexico, eds. John Holloway and Eloína Peláez (London: Pluto Press, 1998), 81–103.

[16] On CripTech, see Vanessa Chang and Lindsey D. Felt, “Recoding CripTech,” SOMArts Cultural Center, 2020, recodingcriptech.com, and “CripTech Incubator,” Leonardo, last modified April 23, 2021, leonardo.info/criptech.

[17] On disability dongles, see s.e. smith, “Disabled people don’t need so many fancy new gadgets. We just need more ramps,” Vox, April 30, 2019, vox.com/first-person/2019/4/30/18523006/disabled-wheelchair-access-ramps-stair-climbing.

[18] On the digital divide, see Bhaskar Chakravorti, “How to Close the Digital Divide in the U.S.,” Harvard Business Review, July 20, 2021, hbr.org/2021/07/how-to-close-the-digital-divide-in-the-u-s.

[19] Shara Tibken, “The Broadband Gap’s Dirty Secret: Redlining Still Exists in Digital Form,” CNET, June 28, 2021, cnet.com/features/the-broadband-gaps-dirty-secret-redlining-still-exists-in-digital-form/.

[20] These problems are not mere hypotheticals. In October 2019, a paper in the journal Science revealed that an algorithm used by medical centers and hospitals to allocate care was discriminating against Black patients. In August 2020, the United Kingdom used a program to guess what students would have scored on exams that were cancelled due to COVID; the program factored in where they lived, automatically lowering the grades of students from underprivileged neighborhoods. For more examples, see the Further Resources below.

[21] Ruha Benjamin, Race After Technology: Abolitionist Tools for the New Jim Code (Cambridge, UK: Polity Press, 2019). See also Ruha Benjamin, ed., Captivating Technology: Race, Carceral Technoscience, and Liberatory Imagination in Everyday Life (Durham: Duke University Press, 2019).

[22] Kashmir Hill, “Wrongfully Accused by an Algorithm,” The New York Times, August 3, 2020, nytimes.com/2020/06/24/technology/facial-recognition-arrest.html; Melissa Hamilton, “The Biased Algorithm: Evidence of Disparate Impact on Hispanics,” American Criminal Law Review 56, no. 4 (Fall 2019): 1533–77.

[23] Safiya Umoja Noble, Algorithms of Oppression: How Search Engines Reinforce Racism (New York: New York University Press, 2018), 1–2.

[24] On the early history of digital art, see Grant D. Taylor, When the Machine Made Art: The Troubled History of Computer Art (New York: Bloomsbury, 2014).

[25] The term “the two cultures” was first popularized in 1959 by C.P. Snow and instantly became an influential idea, although it has since been widely criticized as an over-simplification. See C.P. Snow, “Two Cultures,” Science 130, no. 3373 (1959): 419.

[26] See Anne Collins Goodyear, “Expo ’70 as Watershed: The Politics of American Art and Technology,” in Cold War Modern: Design 1945–1970, eds. David Crowley and Jane Pavitt (London: Victoria and Albert Museum, 2008), 197–203.

[27] Gail R. Scott, “Richard Serra,” in A Report on the Art and Technology Program of the Los Angeles County Museum of Art, 1967–71, ed. Maurice Tuchman (Los Angeles: Los Angeles County Museum of Art, 1971), 300. Tellingly, while acknowledging the way that technology contributes to the oppression of Black and Vietnamese people, Serra’s words might imply that they do not also have technology themselves. This perpetuates the idea of racialized “Others” as being sub-human, given the close identification of humanity with the ability to make tools.

[28] An excellent introduction to this field is Christiane Paul, Digital Art, 3rd edition (London: Thames and Hudson, 2015).

[29] See David Firestone, “While Barbie Talks Tough, G.I. Joe Goes Shopping,” The New York Times, December 31, 1993, nytimes.com/1993/12/31/us/while-barbie-talks-tough-g-i-joe-goes-shopping.html.

[30] See Ken Gonzales-Day, “The Bone-Grass Boy,” kengonzalesday.com/archive/the-bone-grass-boy/.

[31] VNS Matrix comprises Josephine Starrs, Julianne Pierce, Francesca da Rimini, and Virginia Barratt.

[32] See VNS Matrix, “A Cyberfeminist Manifesto for the 21st Century,” Net Art Anthology, Rhizome, 1991, anthology.rhizome.org/a-cyber-feminist-manifesto-for-the-21st-century.

[33] AbTeC now lives at abtec.org. See Rea McNamara, “Skawennati Makes Space for Indigenous Representation and Sovereignty in the Virtual World of Second Life,” Art in America, July 1, 2020, artnews.com/art-in-america/features/skawennati-abtec-island-indigenous-community-second-life-1202693110/.

[34] Brian Mackern, “Netart Latino Database,” Net Art Anthology, Rhizome, 1999–2004, anthology.rhizome.org/netart-latino-database. On art and technology, including digital art, in Latin America, see María Fernández, ed., Latin American Modernisms and Technology (Trenton, NJ: Africa World Press, 2018).

[35] An emulation of this work can be accessed via Victoria Vesna, “Bodies©Incorporated,” Net Art Anthology, Rhizome, 1996, anthology.rhizome.org/bodies-incorporated. See also Jennifer González, “The Appended Subject: Race and Identity as Digital Assemblage,” in Race in Cyberspace, eds. Beth E. Kolko, Lisa Nakamura, and Gilbert B. Rodman (New York: Routledge, 2000), 27–50.

[36] The project was recently conserved and is now available online again, albeit without the live elements, at Shu Lea Cheang, “Brandon: A One Year Narrative Project in Installments,” Guggenheim, brandon.guggenheim.org. See also Shu Lea Cheang, “Brandon,” Net Art Anthology, Rhizome, 1998–1999, anthology.rhizome.org/brandon.

[37] The original website, parts of which can still be accessed via the Internet Archive, was echonyc.com/~confess/. See Thomas Foster, “Cyber-Aztecs and Cholo-Punks: Guillermo Gómez-Peña’s Five-Worlds Theory,” in “Mobile Citizens, Media States,” ed. Carlos J. Alonso, special topic, PMLA 117, no. 1 (January 2002): 43–67. See also Evantheia Schibsted, “Confessions of a Webback,” Wired, January 1, 1997, wired.com/1997/01/ffpena/.

[38] María Fernández, “Postcolonial Media Theory,” Art Journal 58, no. 3 (Autumn 1999): 58–73.

[39] See Jennifer Chan, “Why Are There No Great Women Net Artists?: Vague Histories of Female Contribution According to Video and Internet Art,” Mouchette, 2011, about.mouchette.org/wp-content/uploads/2012/05/Jennifer_Chan2.pdf; Kimberly Drew, “Towards a New Digital Landscape,” SuperScript, May 11, 2015, walkerart.org/magazine/equity-representation-future-digital-art; Ben Valentine, “Where Are the Women of Color in New Media Art?,” Hyperallergic, April 7, 2015, hyperallergic.com/195049/where-are-the-women-of-color-in-new-media-art/; Aria Dean, “Blackness in Circulation: A History of Net Art,” in The Art Happens Here: Net Art Anthology, ed. Michael Connor with Aria Dean and Dragan Espenschied (New York: Rhizome, 2019), 394–99; and Lila Pagola, “Netart Latino Database: The Inverted Map of Latin American Net Art,” in The Art Happens Here, 400–5.

[40] Paul Hertz, “Colonial Ventures in Cyberspace,” Leonardo 30, no. 4 (1997): 249–59.

[41] Melvin Kranzberg, “Technology and History: ‘Kranzberg’s Laws,’” Technology and Culture 27, no. 3 (July 1986): 545.

[42] On the relationship between Blackness and surveillance, see Simone Browne, Dark Matters: On the Surveillance of Blackness (Durham: Duke University Press, 2015).

[43] See Indigenous Futurisms: Transcending Past/Present/Future (Santa Fe: IAIA Museum of Contemporary Native Arts, 2020).

[44] Meredith Broussard, Artificial Unintelligence: How Computers Misunderstand the World (Cambridge, MA: MIT Press, 2018).

Barnett, Fiona, Zach Blas, micha cárdenas, Jacob Gaboury, Jessica Marie Johnson, and Margaret Rhee. “QueerOS: A User’s Manual.” In Debates in the Digital Humanities 2016, edited by Matthew K. Gold and Lauren F. Klein. Minneapolis: University of Minnesota Press, 2016. dhdebates.gc.cuny.edu/projects/debates-in-the-digital-humanities-2016.

Benjamin, Ruha. Race After Technology: Abolitionist Tools for the New Jim Code. Cambridge, UK: Polity Press, 2019.

———, ed. Captivating Technology: Race, Carceral Technoscience, and Liberatory Imagination in Everyday Life. Durham: Duke University Press, 2019.

Brock, André, Jr. Distributed Blackness: African American Cybercultures. New York: New York University Press, 2020.

Browne, Simone. Dark Matters: On the Surveillance of Blackness. Durham: Duke University Press, 2015.

Chan, Jennifer. “Why Are There No Great Women Net Artists?: Vague Histories of Female Contribution According to Video and Internet Art.” Mouchette. 2011. about.mouchette.org/wp-content/uploads/2012/05/Jennifer_Chan2.pdf.

Chun, Wendy Hui Kyong. “Introduction: Race and/as Technology; or, How to Do Things to Race.” In “Race and/as Technology.” Special issue, Camera Obscura: Feminism, Culture, and Media Studies 70, vol. 24, no. 1 (2009): 6–35.

Cleaver, Harry. “The Zapatistas and the Electronic Fabric of Struggle.” In Zapatista! Reinventing Revolution in Mexico, edited by John Holloway and Eloína Peláez, 81–103. London: Pluto Press, 1998.

Coleman, Beth. “Race as Technology.” In “Race and/as Technology,” edited by Wendy Hui Kyong Chun. Special issue, Camera Obscura: Feminism, Culture, and Media Studies 70, vol. 24, no. 1 (2009): 177–207.

Daniels, Jessie. “Rethinking Cyber-Feminism(s): Race, Gender, and Embodiment.” In “Technologies.” Special issue, Women’s Studies Quarterly 37, no. 1/2 (Spring/Summer 2009): 101–24.

———. “Race and Racism in Internet Studies: A Review and Critique.” new media & society 15, no. 5 (2012): 695–719.

Dean, Aria. “Blackness in Circulation: A History of Net Art.” In The Art Happens Here: Net Art Anthology, edited by Michael Connor with Aria Dean and Dragan Espenschied, 394–99. New York: Rhizome, 2019.

Drew, Kimberly. “Towards a New Digital Landscape.” SuperScript, May 11, 2015. walkerart.org/magazine/equity-representation-future-digital-art.

Fernández, María. “Postcolonial Media Theory.” Art Journal 58, no. 3 (Autumn 1999): 58–73.

———, ed. Latin American Modernisms and Technology. Trenton, NJ: Africa World Press, 2018.

Gaboury, Jacob. “Becoming NULL: Queer Relations in the Excluded Middle.” Women & Performance: A Journal of Feminist Theory 28, no. 2 (2018): 143–58.

González, Jennifer. “The Appended Subject: Race and Identity as Digital Assemblage.” In Race in Cyberspace, edited by Beth E. Kolko, Lisa Nakamura, and Gilbert B. Rodman, 27–50. New York: Routledge, 2000.

———. “The Face and the Public: Race, Secrecy, and Digital Art Practice.” In “Race and/as Technology,” edited by Wendy Hui Kyong Chun. Special issue, Camera Obscura: Feminism, Culture, and Media Studies 70, vol. 24, no. 1 (2009): 37–65.

Hamilton, Amber M. “A Genealogy of Critical Race and Digital Studies: Past, Present, and Future.” Sociology of Race and Ethnicity 6, no. 3 (2020): 292–301.

Hammonds, Evelynn M. “New Technologies of Race.” In The Gendered Cyborg: A Reader, edited by Gill Kirkup, Linda Janes, Fiona Hovenden, and Kathryn Woodward, 305–17. New York: Routledge, 2000.

Haraway, Donna J. Simians, Cyborgs, and Women: The Reinvention of Nature. New York: Routledge, 1991.

Hertz, Paul. “Colonial Ventures in Cyberspace.” Leonardo 30, no. 4 (1997): 249–59.

Indigenous Futurisms: Transcending Past/Present/Future. Santa Fe: IAIA Museum of Contemporary Native Arts, 2020.

Kafer, Alison. Feminist, Queer, Crip. Bloomington: Indiana University Press, 2013.

Keeling, Kara. “Queer OS.” Cinema Journal 53, no. 2 (Winter 2014): 152–57.

McGlotten, Shaka. “Black Data.” In No Tea, No Shade: New Writings in Black Queer Studies, edited by E. Patrick Johnson, 262–87. Durham: Duke University Press, 2016.

McIlwain, Charlton D. Black Software: The Internet and Racial Justice, from the AfroNet to Black Lives Matter. New York: Oxford University Press, 2020.

McPherson, Tara. “Why Are the Digital Humanities So White? or Thinking the Histories of Race and Computation.” In Debates in the Digital Humanities, edited by Matthew K. Gold. Minneapolis: University of Minnesota Press, 2012. dhdebates.gc.cuny.edu/projects/debates-in-the-digital-humanities.

Menjívar, Jennifer Gómez and Gloria Elizabeth Chacón, eds. Indigenous Interfaces: Spaces, Technology, and Social Networks in Mexico and Central America. Tucson: The University of Arizona Press, 2019.

Nakamura, Lisa. Cybertypes: Race, Ethnicity, and Identity on the Internet. New York: Routledge, 2002.

———. Digitizing Race: Visual Cultures of the Internet. Minneapolis: University of Minnesota Press, 2007.

Noble, Safiya Umoja. Algorithms of Oppression: How Search Engines Reinforce Racism. New York: New York University Press, 2018.

Noble, Safiya Umoja and Brendesha M. Tynes, eds. The Intersectional Internet: Race, Sex, Class, and Culture Online. New York: Peter Lang, 2016.

Pagola, Lila. “Netart Latino Database: The Inverted Map of Latin American Net Art.” In The Art Happens Here: Net Art Anthology, 400–5. New York: Rhizome, 2019.

Paul, Christiane. Digital Art. 3rd edition. London: Thames and Hudson, 2015.

Rhizome. Net Art Anthology. 2019. anthology.rhizome.org.

Ruberg, Bonnie. Video Games Have Always Been Queer. New York: New York University Press, 2019.

Russell, Legacy. Glitch Feminism: A Manifesto. London: Verso Books, 2020.

Valentine, Ben. “Where Are the Women of Color in New Media Art?” Hyperallergic, April 7, 2015. hyperallergic.com/195049/where-are-the-women-of-color-in-new-media-art/.

An essay by Jorge Luis Borges begins with a fictional Chinese encyclopedia that divides animals into:

(a) belonging to the Emperor, (b) embalmed, (c) tame, (d) suckling pigs, (e) sirens, (f) fabulous, (g) stray dogs, (h) included in the present classification, (i) frenzied, (j) innumerable, (k) drawn with a very fine camelhair brush, (l) et cetera, (m) having just broken the water pitcher, (n) that from a long way off look like flies.[1]

When first reading this list of fanciful classifications, we might be amused that the natural divisions between animals appear to have been misunderstood, or disregarded. Upon further reflection, however, we may begin to question whether such divisions are really natural at all. In any classification system, the boundaries between categories are often debatable or fluid: historical time periods and musical genres are notoriously hard to pin down, for example. In other words, Borges’s Chinese encyclopedia draws on a racist caricature of Asian cultures as backwards to suggest the absurdity of all classifications. In addition to not being natural, classifications also are not neutral: they necessarily reflect what a society thinks is worth studying. In the West, modern encyclopedias first emerged during the European Enlightenment, when colonization and the building of empires drove the desire to organize knowledge systematically. One of Borges’s points is that such systems never transcend the cultures that produce them, despite their claims to universality.

The exhibition Difference Machines: Technology and Identity in Contemporary Art explores how this is true even in the case of the categories that describe our collective identities. We are all complex beings with real physical differences from one another, but it is society that determines how these differences are named, which is why identities often shift across space and time. Contemporary racial categories, for example, are not natural divisions in the human species but social constructions. There are differences between groups of people from different geographical locations across the world, but there is no stable group of observable or genetic traits that can be used to distinguish “Black” from “white” or “Asian.” Genetically, there are more differences within the so-called races than between them. While there is no scientific basis for race, this does not mean that race (or racism) does not exist. More than a century ago, W.E.B. Du Bois wrote that disregarding race because it is not based on fact does nothing to address its force in societies that have been shaped by colonialism and slavery.[2] As theorist Paul Gilroy writes, we are not born into a race, but racialized. Less a noun than a verb, race is a kind of technology for producing difference that has been used to justify the treatment of enslaved and colonized peoples and to affirm national identity.[3]

Like race, gender is also a social construct. Sex and gender are often conflated, but one’s sex is typically associated with one’s biology, while one’s gender is an identity—hence the varying genders described in different cultures and time periods.[4] As Judith Butler has written, gender is not an essential or innate trait: “gender is in no way a stable entity,” but rather “an identity instituted through a styled repetition of acts.”[5] Every day, we perform our gender through the way we dress, speak, move, and act towards others, in ways that are both deeply personal and conditioned by society. For example, cultural norms may dictate that it is more “natural” for a woman to accept commands and offer assistance than a man—which is precisely why the default voices of virtual assistants such as Siri and Alexa are feminine.

Similarly, while a preference for sexual partners is rooted in our biology, identities such as straight or gay are shaped by society. The term homosexual, for example, was not invented until the late nineteenth century, and its connotation rapidly shifted. Originally coined by an activist who was trying to fight laws that criminalized sodomy, the term was soon used to pathologize same-sex desire as a mental disorder tied to criminal acts, such as pedophilia. In this sense, a label can be a condemnation that marginalizes certain behaviors. Over the past twenty years, however, labels including Lesbian, Gay, Bisexual, Transgender, and Queer have been embraced by many (though they are still eschewed by some, particularly outside Western frameworks). Formerly a pejorative, the term queer is now often seen as a unifying, positive identity. Michael Warner defines queerness as the opposite of heteronormativity, the beliefs and policies that position heterosexuality as the default sexual orientation.[6] Queerness can even question the value of rigidly categorizing sexuality, which historically has been the means used to police and control it.

Even the category of “disabled” is socially constructed. In her research on ableism, Fiona Kumari Campbell describes how laws, institutions, technologies, and other social forces distinguish normal from pathological and able-bodied from disabled identities.[7] As the word suggests, for a body to be described as dis-abled, it must be compared to another, able body. People only become “disabled” when living in a society that is not designed to accommodate how their particular body functions or performs work. Most disabled people choose to describe themselves as disabled, rather than “differently abled,” to underscore that one is actively “disabled” by social norms, just as one is “raced” and “gendered.” Still, as with race, gender, and sexuality, this label has real-world consequences, exposing individuals to prejudice and discrimination.

Throughout the twentieth century, various marginalized communities (including African Americans and LGBTQ+ and disabled people) organized to demand their civil rights. Today, the fight for social justice continues, alongside calls to make mainstream politics and culture more inclusive. At the same time, demands for more representation can wind up reinforcing labels instead of interrogating how they relate to systems of power. In response, many people are now questioning the politics of representation, focusing more on how our identities are defined, how their boundaries are policed, and how they relate to systemic inequities. Some even argue that being required to conform to an identity in order to be “counted” is its own kind of oppression. Furthermore, asserting our identities can make us vulnerable to discrimination or tokenization, leading many activists to echo Michel Foucault’s assessment, in his work on the origins of modern surveillance and social control, that “visibility is a trap.”[8]

Although our collective identities can be a liability, some argue that they also can be a source of empowerment. In the 1980s, Gayatri Chakravorty Spivak used the term “strategic essentialism” to describe how marginalized people can temporarily organize themselves on the basis of shared identities—even if those identities are not really “essential” or intrinsic.[9] To cite one example, people with “invisible” disabilities who embrace the term “disabled” strategically challenge the idea that being disabled must be bad or shameful. Historically speaking, our identities also have been the basis for the shared cultural traditions that give meaning to many of our lives, from musical subcultures to holidays. In this way, identities can be sources of joy and pride, too.

[1] Jorge Luis Borges, “The Analytical Language of John Wilkins,” in Other Inquisitions 1937–1952, trans. Ruth L. C. Simms (Austin: University of Texas Press, 1964), 103. The passage was made famous by Michel Foucault, who credited it as the inspiration for his book The Order of Things: An Archaeology of the Human Sciences (New York: Pantheon, 1970 [1966]), xv.

[2] W.E.B. Du Bois, The Souls of Black Folk: Essays and Sketches (Chicago: A. C. McClurg, 1903).

[3] Paul Gilroy, Against Race: Imagining Political Culture Beyond the Color Line (Cambridge: Harvard University Press, 2000).

[4] That said, even our naming of biological sex is shaped by society.

[5] Judith Butler, “Performative Acts and Gender Constitution: An Essay in Phenomenology and Feminist Theory,” Theatre Journal 40, no. 4 (1988): 519–31.

[6] Michael Warner, Fear of a Queer Planet: Queer Politics and Social Theory (Minneapolis: University of Minnesota Press, 1993).

[7] Fiona Kumari Campbell, Contours of Ableism: The Production of Disability and Abledness (New York: Palgrave Macmillan, 2009).

[8] Michel Foucault, Discipline and Punish: The Birth of the Prison (New York: Vintage, 1995), 200.

[9] Gayatri Chakravorty Spivak, “Can the Subaltern Speak?,” in Marxism and the Interpretation of Culture, ed. Cary Nelson and Lawrence Grossberg (Urbana: University of Illinois Press, 1988), 271–316. While Spivak later disavowed the concept of strategic essentialism due to its co-optation by nationalist groups, it still helpfully underscores that identities can be a source of power.

Perhaps inevitably, Difference Machines reflects our long-standing commitment to experimental and socially engaged practices at the intersection of art and technology, as well as our own experience of difference. Paul Vanouse (who founded Coalesce: Center for Biological Art in 2015) is an artist whose projects since the 1990s have explored the cultural impact of emerging technologies. He is especially interested in how the technologies that we use to identify people, like fingerprinting and DNA analysis, shape our ideas about difference. A biracial Black man, he has recently used DNA samples from his own mixed-race family to explore the complex history of scientific racism. For most of her life, Tina Rivers Ryan has lived with a disabling condition that she manages with devices that continuously regulate her body’s chemistry via algorithms, transforming her into a literal cyborg.[1] Her research as a scholar has focused on the relationship between our bodies and technology, pushing back on transhumanist fantasies that technology can separate our minds from our bodies and the identities that are attached to them. For both of us, this exhibition is political and also deeply personal. In our own ways, we each consciously inhabit identities shaped by digital tools. But everyone’s identities are now shaped by digital tools, regardless of whether you’re a digital native or a Luddite. Art has become a way for us to make sense of the condition in which we find ourselves; we hope the works in this exhibition will do the same for you.

[1] The word cyborg is used here literally, not metaphorically. The classic theory of metaphorical cyborgs is Donna J. Haraway, Simians, Cyborgs, and Women: The Reinvention of Nature (New York: Routledge, 1991). Importantly, Haraway’s work has been critiqued as ultimately reinforcing the categories and hierarchies of difference that it purported to dismantle. See Julia R. DeCook, “A [White] Cyborg’s Manifesto: The Overwhelmingly Western Ideology Driving Technofeminist Theory,” Media, Culture & Society 43, no. 6 (September 2021): 1158–67 and Alison Kafer, Feminist, Queer, Crip (Bloomington: Indiana University Press, 2013).

3-D printing: The use of a special printer that produces physical objects from digital models, usually by depositing malleable plastics layer by layer

Afrofuturism: A form of science fiction that incorporates elements of Black culture

Algorithm: A fixed set of instructions that a computer follows to perform calculations or solve a problem; all software programs are based on them

Algorithmic oppression: The creation and use of algorithms that perpetuate systemic oppression; coined by Safiya Umoja Noble in her 2018 book Algorithms of Oppression

Algorithmic risk assessment: The controversial use of algorithms to assess the likelihood that a convicted person will commit another crime; the results, which are known to be biased against marginalized communities, are then used to determine that person’s sentence

Artificial intelligence: A software program that approximates human intelligence (including the ability to make decisions or answer questions) by processing large sets of data

ASCII: Abbreviation (pronounced “ask-ee”) of the American Standard Code for Information Interchange, a standard set of 128 letters, numbers, punctuation marks, and special characters represented by digital codes

Avatar: A visual representation of an individual’s identity on the internet or in virtual spaces; examples include Memojis and characters in Second Life

Big Data: Enormous databases of information (and especially our personal information) that are used to discover patterns; also the companies that create such databases

Bioart: A form of art that utilizes the materials and methods of the biological sciences

Biometric surveillance: A form of surveillance that depends upon the measuring of the body, such as fingerprinting, retina scans, and voice and facial recognition

CripTech: Short for “Cripple Technology,” or technology that is designed by and for disabled people

Cyberspace: A digital simulation of physical space, or, more generally, the overall “environment” of the internet; coined by William Gibson and popularized by his 1984 book Neuromancer

Cyborg: Short for “cybernetic organism,” or an organism whose body is regulated with the assistance of self-adjusting technology; coined in 1960 to describe mice with insulin catheters in place of their tails

Database: An organized compilation of information stored by a computer

Dead drop: A digital storage device that is left in a public or semi-public place so that people can anonymously download files from it

Difference engine: Charles Babbage’s mechanical calculator, first developed in the 1820s, which anticipated his analytical engine, the first design for a general-purpose computing machine and a precursor of the modern computer

Digital art: A term popularized in the 1990s to describe art that is made with digital tools, such as computers and the internet; now that most artists use digital tools at some point in their process, it may be more narrowly defined as art that is “about” those tools, in that it explores their operation, aesthetics, or cultural meaning

Digital colonialism: As used by artist Morehshin Allahyari since 2015, the use of digital technologies to claim ownership over another culture’s heritage; related to the term “electronic colonialism,” or the process by which technologically advanced countries assert their relative power over other countries, as described by Herbert Schiller in his 1976 text Communication and Cultural Domination

Digital divide: The gap between those who have access to digital tools such as computers and the internet, and those who do not

Digital double: The aggregate information that companies know about a specific individual based on the data they collect from that individual’s online activities, with or without their knowledge or consent

Digital native: Someone who has grown up with digital technologies and therefore may prefer them to their analog counterparts (although the term has been critiqued for reducing the variety of experiences of technology to generational difference); coined by Marc Prensky in his 2001 article “Digital Natives, Digital Immigrants”

Digital redlining: The process by which internet service providers offer inferior services or refuse service to marginalized communities based on their anticipated profits

Disability dongle: A typically expensive device or technology that engineers made without consulting disabled people and that disabled people either do not want or cannot afford

Facial recognition: A complex of technologies—including cameras, software programs, and databases—that allow computers to recognize and compare individual faces

Filter: In social media and photo-editing applications, a “layer” that can be added to a photo or video that transforms its appearance, particularly with the goal of making more attractive or amusing selfies

Filter bubble: A narrow selection of content that algorithms present to users based on their previous online interactions, which has the effect of reinforcing their current worldviews

Glitch: A technical malfunction; in art, the creative use of malfunctioning software or hardware

Hacking: Gaining unauthorized access to digital information; more broadly, using technology in ways other than its creators intended

Hacktivism: The use and creative misuse of digital technologies to offer cultural critique and bring about social or political change

Handle: Colloquially, a name or nickname; in technology, a name that identifies an individual user’s profile on a particular internet platform, whether anonymous or mirroring the user’s real name

Identity tourism: The act of pretending to have a different identity on the internet, and especially in a way that replicates the exploitative power dynamics of colonialism; coined by Lisa Nakamura in her 1995 essay “Race In/For Cyberspace: Identity Tourism and Racial Passing on the Internet”

Indigenous futurism: A form of science fiction that incorporates elements of Indigenous cultures

Indigenous technology: Technology developed by Indigenous communities; examples include wampums and quipu

Internet: A computer network that uses standard protocols to allow computers to communicate with each other; the modern internet relies on the Transmission Control Protocol/Internet Protocol (TCP/IP), which was launched on January 1, 1983

Machinima: A portmanteau of “machine” and “cinema,” referring to animations made by recording inside videogames and other virtual worlds

Media art: An umbrella term for art that is made with media technologies, such as radio, film, television, video, digital games, and the internet. In the 1990s and early 2000s, the term “new media art” was used to specifically designate media art made using then-new forms of digital media; related terms include “variable media art” and “time-based media art”

Military-industrial complex: The network of individuals and institutions engaged in the production of weapons; coined by U.S. President Dwight D. Eisenhower in his Farewell Address on January 17, 1961

Net (or internet) art: Art made with and experienced on the internet; while sometimes used interchangeably, the term “net art” usually refers specifically to the internet art of the 1990s and early 2000s

Non-graphical interface: A way of interacting with a computer system that is based exclusively on text; an interface that includes graphics is known as a graphical user interface, commonly referred to as a GUI, pronounced “gooey”

Operating system: The software that manages a computer’s software and hardware

Predictive policing: The controversial use of algorithms and databases to predict the likelihood that crimes will be committed in a certain area or time or by certain individuals or groups of individuals; the results, known to be biased against marginalized communities, are then used to increase surveillance, which leads to the infringement of civil rights

Profile: The presentation of a user’s information on a social media platform, typically including their photo, name or handle, personal information, and recent posts

Social media: Online platforms such as Facebook, Instagram, and Twitter that allow users to create and interact with social networks

Tag: In computing, a word or series of words used to associate content (including files or posts) with a shared topic or theme; the act of assigning a tag to content is called “tagging”

Techbro: A derogatory term used to describe the sexist men who work in technology and typically are dismissive of any social critiques of technology

Technochauvinism: The belief that technology can be used to solve any and every problem, which ignores the idea that technology itself has limits, or might be a problem in and of itself; coined by Meredith Broussard in her 2018 book Artificial Unintelligence: How Computers Misunderstand the World

Technocracy: A form of government or society that is run by technical experts according to the technological principles of efficiency and rationality

Technology: Applied science that addresses practical needs; examples include engineering, medicine, and computing

Technophobia: The condition of being afraid of technology; the opposite of technophilia, or the condition of loving technology

Text-based game: A game that asks the player to respond to questions or situations by selecting or writing texts, rather than interacting with graphics

Transhumanism: The belief that technology can be used to transcend the limitations of the human body and specifically that our minds can be separated from our bodies

Voice recognition: A complex of technologies—including microphones, software programs, and databases—that allow computers to identify, compare, and respond to individual voices

World Wide Web: An information system built on the internet, first proposed in 1989, that allows users to navigate between documents and websites via a “web” of hyperlinks

October 17, 11 am • Albright-Knox Northland

Explore Difference Machines with its curators, University at Buffalo Professor Paul Vanouse and Albright-Knox Assistant Curator Tina Rivers Ryan.

November 1, 6:30 pm • Center for the Arts Room 112, UB North Campus

Artist Sean Fader, whose work is featured in Difference Machines, presented an overview of his artistic practice as part of the University at Buffalo Department of Art Visiting Artist Speaker Series.

This event was presented via Zoom.

November 5, 6 pm • Virtual

Join us for a free virtual screening of the award-winning 2020 documentary Coded Bias, which surveys the fight to ensure that artificial intelligence (AI) systems don't infringe on our civil rights. The screening will be followed by a panel discussion with Difference Machines artist Rafael Lozano-Hemmer, whose works consider the politics of facial recognition technologies, and Squeaky Wheel Curator Ekrem Serdar, who organized the recent exhibition Johann Diedrick: Dark Matters, which explores how AI systems underserve Black communities. Watch a recording of the discussion below.

November 8, 6:30 pm • Virtual

Artist Stephanie Dinkins, whose work is featured in Difference Machines, presented an overview of her artistic practice as part of the University at Buffalo Department of Art Visiting Artist Speaker Series.

This event was presented via Zoom.

December 10, 6 pm • Virtual

Albright-Knox Assistant Curator Tina Rivers Ryan, who co-organized Difference Machines, gives an introduction to NFTs (non-fungible tokens) and the recent emergence of new markets for digital art. An art historian who has written extensively about the development of digital art since the 1960s, she also sketches how NFTs relate to digital art's past and future. Watch a recording of the talk below.

January 7, 6 pm • Virtual

Dr. Margaret Rhee and Dr. Cody Mejeur presented on the work of the University at Buffalo–based Palah 파랗 Light Lab, a collective of transdisciplinary artists and scholars who explore the intersection of social justice and technology. They were joined by Difference Machines co-curators Paul Vanouse and Tina Rivers Ryan to discuss common themes between the Lab's work and the exhibition, and how we can use art and technology to advance social justice locally. Watch the presentation below.

January 14, 6 pm • Virtual

This special live virtual performance was given by Difference Machines artists Mendi + Keith Obadike, who are known not only for their early internet–based art projects, but also for their pioneering work as sound artists.

Difference Tones was a sound and video performance presented via the virtual space of Zoom and a meditation on the idea of difference. It centered on the play and interactions between two channels of sound and two images in a single channel of video. The title is taken from the field of acoustics: when two notes are played simultaneously, a third “difference tone,” or imaginary note, is faintly heard.

Buffalo Game Space • November 21, 2:30 pm

Buffalo Game Space member Joan Nobile led a conversation about how the organization strives to create a place for the community to learn and explore technology and game development.

GLYS Western New York • December 19, 2:30 pm

Visitors joined members of GLYS Western New York for a conversation about their work providing a safe space for LGBTQ+ youth and their relationship to technology.

Devonya Havis • January 16, 2:30 pm

Dr. Devonya Havis, University at Buffalo Center for Diversity Innovation Distinguished Visiting Scholar, 2021–22, discussed her research exploring the intersections of race and technology.

Made-to-Order Poems with Just Buffalo Literary Center • November 14, 10:30 am–3 pm

Join Buffalo Center for Arts and Technology • December 12, 10:30 am–3 pm

Drop-in art activity by Squeaky Wheel • January 9, 10:30 am–3 pm

Difference Machines is a "Must See" exhibition, according to ARTFORUM's art guide.

"Nostalgic desires for a world before computers or the internet don’t help us address the social and political effects of increased reliance on them. Difference Machines demands that audiences take seriously the presence and effect of these technologies," writes The Brooklyn Rail's Charlotte Kent.

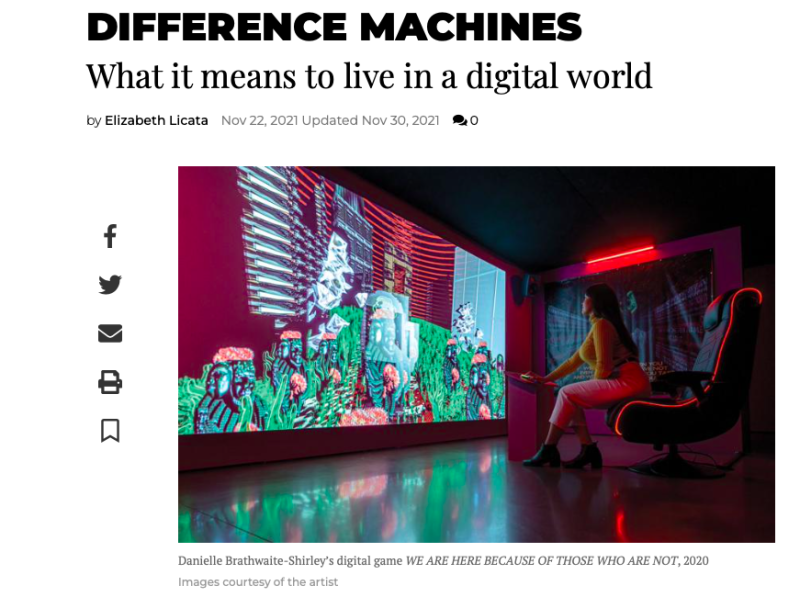

"The show’s basic premise is this: technology is informed by the biases and assumptions of its creators and the society in which they operate. It should come as no surprise that facial recognition systems, search algorithms, and databases reflect existing prejudices," writes Buffalo Spree's Elizabeth Licata.